Power is something we see everywhere. It takes many forms: the authority of a government, the leadership of a team, the electricity in our homes, or the engine that drives a car. In every case, power helps us create change and get things done.

But a critical question comes up: Is power useful without control?

Think about electricity without a switch, a car without brakes, or characters in Marvel stories who gain extraordinary powers but turn them against humanity. These examples show one thing clearly: power without control can become dangerous, and often destructive.

From Thinking to Doing

AI has moved far beyond its earlier role. At first, it was mainly used for simple, narrow tasks such as generating emails or text. Today, AI agents do more than draft emails; they can execute operations. They can manage workflows, perform tasks, and even take actions based on instructions.

This marks a major shift in the evolution of AI agents: from just thinking to doing.

What makes this change possible is connectivity. Earlier AI systems were isolated and had limited impact. Modern AI systems are integrated with real systems and platforms, which allows them to carry out actions directly. They have also become more powerful through the addition of memory: agents can remember past interactions, user preferences, and task behaviour. This memory allows them to learn, adapt, and perform better over time. Once considered weak and restricted, AI agents are now becoming more powerful, autonomous, and operational day by day.

This growing independence improves speed and efficiency, but it also introduces a serious risk: not just giving wrong answers, but taking wrong actions.

Automation Without Supervision

Automation is powerful, but without supervision, it becomes risky.

Automation without supervision is becoming a critical concern. Today's AI agents, powered by large language models, can analyse data, make decisions, and solve problems independently, sometimes faster than humans. They can automate tasks and improve performance continuously, which makes them highly efficient. However, being more operational and efficient is not inherently good. Without proper controls, these systems can unintentionally expose sensitive information, execute unauthorized actions, or even perform illegal tasks that could cause significant harm.

Just like a car without brakes, automation without supervision can lead to serious harm. The challenge is not only to tap into the vast potential of these systems, but to make sure that strong controls, clear ethical boundaries, and human oversight are in place, so that efficiency never turns into risk.

Risks of Uncontrolled AI Agents

As AI agents become more independent, the main challenge becomes controlling which tasks they execute and which permissions they hold.

These systems can read emails, access documents, browse websites, and handle sensitive information. Some tasks, such as sending an email, are considered low risk. Others, like transferring money or handling sensitive data, carry much more serious risk.

Because AI agents work in complex, unstructured environments, they may misunderstand instructions and take unintended actions. Without clear limits, they can go beyond what was expected. Their ability to handle sensitive data makes them efficient, but it also increases the danger. This can result in privacy breaches, unauthorized activities, and potential security risks.

That's why human oversight is critical. The real danger isn't just wrong answers; it's wrong actions with real consequences.

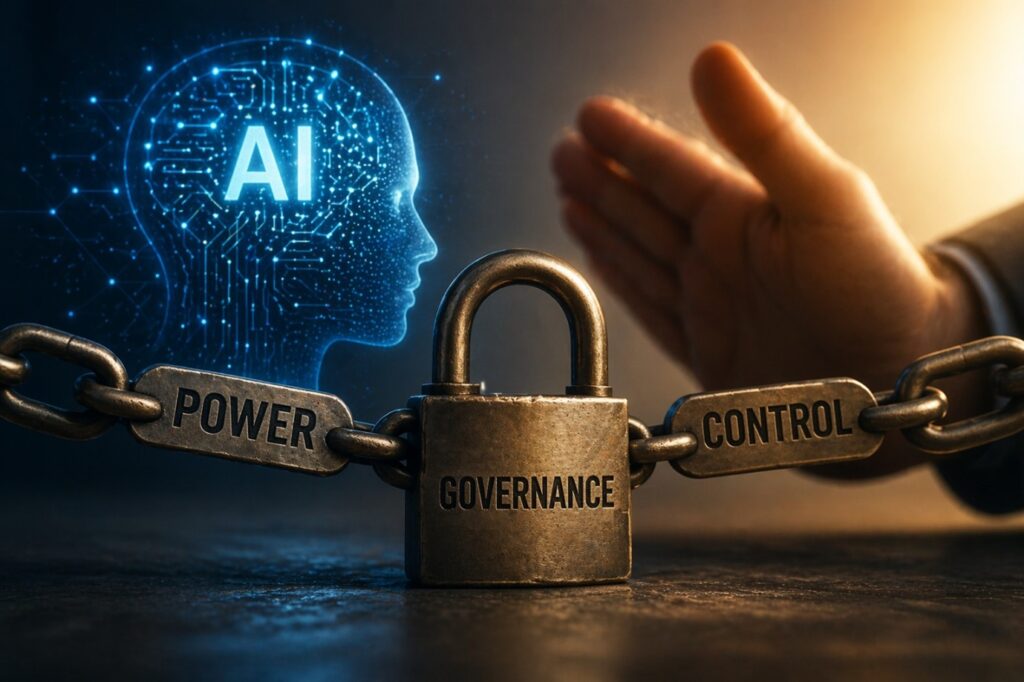

Why AI Governance Is Essential

These systems are built on two key ideas: autonomy and authority. Autonomy is the ability to carry out tasks independently, while authority defines the level of access to systems and data. Both need to be carefully managed. Autonomy shouldn't be granted in full right away; it should grow gradually, depending on the risk level of the actions involved. For instance, sending an email is relatively low-risk, but transferring money or handling sensitive data is high-risk and requires stricter oversight.

To prevent misuse, systems should operate with controlled autonomy: not fully independent, but requiring human approval in critical scenarios. Clear boundaries must ensure that not every action happens automatically, and permissions should be limited to avoid unrestricted access. Continuous tracking and monitoring are essential, because these systems should never be trusted blindly.
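The risk-based, gradually expanding autonomy described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the action names, the two-level `Risk` scale, `AgentPolicy`, `decide`, and the `approve` callback standing in for a human reviewer are all hypothetical. Authority is modelled as an explicit allowlist of actions, and autonomy as a ceiling on the risk level the agent may act on without a human.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = 1
    HIGH = 2


# Hypothetical risk table, following the article's examples:
# sending email is low risk; moving money or touching sensitive data is high risk.
ACTION_RISK = {
    "send_email": Risk.LOW,
    "transfer_money": Risk.HIGH,
    "read_sensitive_data": Risk.HIGH,
}


@dataclass
class AgentPolicy:
    # Authority: the only actions this agent may request at all (least privilege).
    allowed_actions: set[str]
    # Autonomy: the highest risk level the agent may act on without a human.
    autonomy_ceiling: Risk = Risk.LOW


def decide(policy: AgentPolicy, action: str, approve: Callable[[str], bool]) -> str:
    """Return "denied", "rejected", or "allowed" for a requested action.

    `approve` is a hypothetical stand-in for a human reviewer.
    """
    if action not in policy.allowed_actions:
        return "denied"  # outside the agent's authority: never reaches a human
    # Unclassified actions are treated as high risk (fail safe).
    risk = ACTION_RISK.get(action, Risk.HIGH)
    if risk.value <= policy.autonomy_ceiling.value:
        return "allowed"  # within the autonomy this agent has been granted
    # Above the ceiling: a human must approve before the action may proceed.
    return "allowed" if approve(action) else "rejected"


if __name__ == "__main__":
    policy = AgentPolicy(allowed_actions={"send_email", "transfer_money"})
    reviewer_says_no = lambda action: False
    print(decide(policy, "send_email", reviewer_says_no))           # allowed
    print(decide(policy, "transfer_money", reviewer_says_no))       # rejected
    print(decide(policy, "read_sensitive_data", reviewer_says_no))  # denied
```

In this sketch, granting more autonomy over time means raising `autonomy_ceiling` for a specific agent once it has earned trust, rather than removing the approval step for everyone. Treating unlisted actions as high risk is a deliberate fail-safe choice: an action nobody has classified should not run unattended.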

Governance is about more than control; it is also about accountability. It requires clearly defining who manages the system, how tasks are supervised, which services are restricted, and how backups and records are maintained. As these technologies grow more powerful, governance must evolve alongside them, ensuring that every action is documented, every responsibility is defined, and every risk is managed. In this way, governance becomes an essential part of deploying these systems safely and responsibly.
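To make "every action is documented" concrete, here is a minimal sketch of an audit trail that ties each agent action to an accountable owner and, where relevant, to the human who approved it. The `AuditEntry` fields and the `AuditLog` class are hypothetical illustrations; the log lives only in memory here, whereas a real deployment would need durable, tamper-resistant storage.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)  # frozen: an entry cannot be edited after it is written
class AuditEntry:
    timestamp: str               # UTC, ISO 8601
    agent_id: str                # which agent acted
    owner: str                   # the human or team accountable for that agent
    action: str                  # what the agent tried to do
    decision: str                # e.g. "allowed", "rejected", "denied"
    approved_by: Optional[str]   # reviewer, or None if no approval was required


class AuditLog:
    """Hypothetical append-only, in-memory audit trail."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, agent_id: str, owner: str, action: str,
               decision: str, approved_by: Optional[str] = None) -> AuditEntry:
        entry = AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent_id=agent_id,
            owner=owner,
            action=action,
            decision=decision,
            approved_by=approved_by,
        )
        self._entries.append(entry)  # append only; there is no update or delete method
        return entry

    def entries(self) -> tuple[AuditEntry, ...]:
        # A tuple copy, so callers cannot reorder or drop past records.
        return tuple(self._entries)

    def by_owner(self, owner: str) -> list[AuditEntry]:
        # Accountability query: everything done by agents this owner is responsible for.
        return [e for e in self._entries if e.owner == owner]

    def to_jsonl(self) -> str:
        # One JSON object per line, a common format for shipping logs elsewhere.
        return "\n".join(json.dumps(asdict(e)) for e in self._entries)


if __name__ == "__main__":
    log = AuditLog()
    log.record("agent-7", "finance-team", "transfer_money", "allowed", approved_by="alice")
    log.record("agent-7", "finance-team", "send_email", "allowed")
    print(log.to_jsonl())
```

Writing one JSON object per line keeps the records easy to forward to whatever storage or monitoring system an organization already uses, which supports the continuous tracking the governance model calls for.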

AI is powerful—but power alone is not enough. Without control, it can become a risk instead of a benefit.